Green and full of terrors

If you want to get a health story into the papers it helps if it sounds controversial and it especially helps if it tells people they can eat food they want to eat: thus the frequency of stories about chocolate, wine, and beer.

This week’s version is a not-very-detailed abstract from a conference in the US that purportedly says healthy food gives you lung cancer. And when I say that’s what the study purportedly shows, I mean:

- MSN: Eating fruits, vegetables and whole grains may increase chance of early onset lung cancer

- The Independent: Eating more fruits and vegetables could put you at risk for this cancer

- Newsweek: Fruits and Vegetables May Increase Your Cancer Risk, New Research Shows

- and even the researchers’ own press release: Eating fruits, vegetables and whole grains may increase chance of early onset lung cancer

One exception is Ars Technica, headlining the story as Absurd study suggests eating fruits and vegetables leads to cancer.

The press release says

“Our research shows that younger non-smokers who eat a higher quantity of healthy foods than the general population are more likely to develop lung cancer,” said Jorge Nieva, MD,

and, no, it really doesn’t show that. For a start, we shouldn’t really be advertising health advice to the general public based on just a conference abstract, with so little detail. In this case even the limited detail we have is enough to say that this shouldn’t be a big health story.

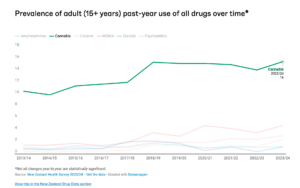

The research is part of a project to study lung cancer in younger people who don’t smoke. It used to be that nearly all lung cancer cases were in older people who had been smokers, but one of the victories of global public health has been to reduce the number of cases like this. Clearly, if someone has lung cancer at age 30 it isn’t because they’ve been smoking for fifty years, and in fact many of them haven’t smoked at all. So, there’s interest in studying what causes their lung cancer. The Epidemiology of Young Lung Cancer study wants to look at genetic attributes and environmental risk factors for lung cancer before age 40.

Finding a control group is hard. You can’t just recruit a whole bunch of young people and see who gets lung cancer, because it’s a very rare disease: you won’t find anyone. You need to look for diagnosed cases, but then you need to decide who to compare them to. In this research the people with lung cancer were compared to people in a big national survey series, NHANES, which asks about diet.

The primary reported finding is that young people diagnosed with lung cancer had healthier diets (according to one measure) than the average of the US population. The researchers don’t say they expected to see this, and my guess is they didn’t. Their theory is that pesticides, in some generic holistic sense, are responsible. It’s obviously not impossible that pesticides could be carcinogenic, but this doesn’t seem like a very good way to find out. In particular, while the people in the study all have lung cancer, they don’t all have similar mutations in their tumours: they don’t have the same sort of lung cancer, so this isn’t picking out some sort of ultra-selective cancer effect. And there’s literally zero actual data on pesticides, just an assumption that they’re present in healthier food.

Everything here is correlations, but better correlational studies with controls consistently find that people with lung cancer eat less fruit, fewer vegetables, and less high-fibre food than people without lung cancer (eg here). There’s a theoretical argument that a diet high in anti-oxidants might reduce the body’s ability to destroy cancer, but you wouldn’t look at a small, unusual subset of lung cancers to study this question. There are perfectly good alternative reasons why the young lung cancer patients might have healthier diets than the US average. They’re young, for a start. They have had their cancers diagnosed early enough to end up in a study like this one, which will correlate with income and interest in medical science. They’re non-smokers.
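To see how the choice of comparison group could produce this pattern on its own, here is a toy simulation in Python. Every number in it is invented for illustration: the smoking rate, the diet scores, and the sample sizes are assumptions of mine, not figures from the study or from NHANES. In this made-up world diet has no effect at all on who gets lung cancer; the only structure is that non-smokers tend to eat a bit better, and the cases are all non-smokers.

```python
import random
import statistics


def simulate(n_population=50_000, n_cases=500, seed=1):
    """Toy world where diet has NO effect on lung cancer risk.

    All parameters are hypothetical, chosen only to illustrate the argument.
    """
    rng = random.Random(seed)

    # Hypothetical general population: 30% smokers, and smokers have a
    # lower average diet-quality score than non-smokers.
    population = []
    for _ in range(n_population):
        smoker = rng.random() < 0.30
        mean_score = 45 if smoker else 65
        population.append((smoker, rng.gauss(mean_score, 10)))

    non_smoker_scores = [score for smoker, score in population if not smoker]

    # "Cases" are picked completely at random from the non-smokers,
    # so diet plays no part in who ends up as a case.
    case_scores = rng.sample(non_smoker_scores, n_cases)

    return {
        "cases": statistics.mean(case_scores),
        "population": statistics.mean(score for _, score in population),
        "non_smokers": statistics.mean(non_smoker_scores),
    }


result = simulate()
for group, mean_score in result.items():
    print(f"{group:12s} mean diet score: {mean_score:.1f}")
```

The cases come out with a better average diet score than the whole population, simply because they are non-smokers and the population is not. Compared with other non-smokers, the difference goes away. The real study could have the same problem with age and income as well as smoking.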

If unreliable evidence of a healthier diet in a subset of people with lung cancer is taken as evidence of harm from pesticides, should we take evidence of a less healthy diet in other groups of people with serious illness as evidence that pesticides are beneficial?