Reeling them in

Q: One News says fishing can improve your mental health!

A: That sounds fairly plausible, actually. Did they say how they know?

Q: “research from the UK”

A: A bit non-specific, innit?

Q:

A: I think it’s this paper. The number matches (“Almost 17% less likely”), it’s from the UK, and there doesn’t seem to be a better match.

Q: And people who fished more had less mental illness?

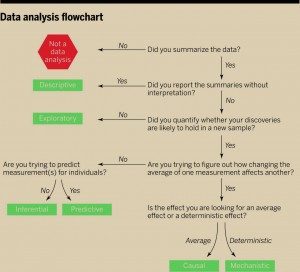

A: People who fished more often had less history of depression, suicidal thoughts, and self-harm. People who fished longer had more suicidal thoughts.

Q: How often did people have to fish to be in the “17% less likely” group?

A: It’s not clearly described. The model in the paper actually has 17% more likely, so maybe it’s a model for “no mental health problem”. If the 17% is for a one-step difference in the survey question, then it’s a surprisingly large effect of a very small difference: 5-6 times a week is a different category from 3-4 times; once every two weeks is different from once per month.
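To make that arithmetic concrete, here is a rough sketch in Python. It assumes, and this is only an assumption because the paper's coding isn't clearly described, that the 17% is an odds ratio per one-step change in the fishing-frequency category. The function names and the five-step range are invented for illustration.

```python
# Illustrative arithmetic only. Assumption: the "17%" is an odds ratio
# per one-category step in fishing frequency; the paper may differ.

def flip_outcome(odds_ratio):
    """Odds ratio for the opposite outcome (e.g. 'problem' vs 'no problem')."""
    return 1.0 / odds_ratio

def compound(odds_ratio, steps):
    """Odds ratio implied by moving several categories, if each step multiplies the odds."""
    return odds_ratio ** steps

# "17% more likely" to have no problem is not the same as
# "17% less likely" to have a problem.
or_no_problem = 1.17
print(f"odds ratio for a problem: {flip_outcome(or_no_problem):.3f}")

# If each step really cut the odds by 17%, the effect would add up quickly.
per_step = 0.83
for steps in range(1, 6):  # hypothetical number of category steps
    print(steps, round(compound(per_step, steps), 3))
```

Under these assumptions, a few steps along the survey scale would imply a very large difference in odds, which is part of why a 17% per-step effect looks surprising.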

Q: Could the anglers just be healthier anyway, or richer or something? Did they collect that information?

A: They did collect it, but they didn’t use it in the analysis, at least in this paper.

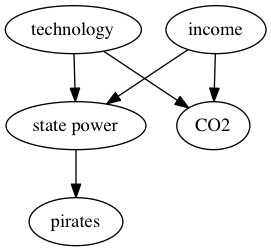

Q:

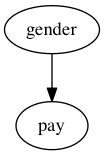

A:

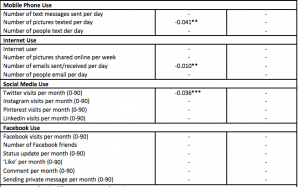

Q: How did they recruit the people?

A: “an online survey that was advertised through the Instagram, Facebook, and Twitter accounts of Angling Direct and Tackling Minds. Angling Direct also sent the survey link to their mailing list, and the link was distributed via the Anglia Ruskin University Twitter account, as well as the authors’ own networks.”

Q: That … sounds like it might not be perfectly representative.

A: 98% of the respondents were men, for example. And 40% were in the top 20% of household income nationally.

Q: Would I be right in guessing that Angling Direct is some sort of fishing magazine?

A: It’s actually a chain of fishing supply stores in the UK. It claims to be the UK’s leading fishing-tackle retailer.

Q: Ok, and Tackling Minds is maybe some sort of fishing education thing?

A: It’s a charity that uses fishing as a mental health intervention.

Q: Couldn’t that have some impact on the correlations between fishing and mental health in the sample?

A: Indeed it could.
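A toy sketch of how that can happen, with every number invented: start with a population where fishing frequency and mental health history are unrelated, then let one group respond to the survey at a higher rate, as might happen if a recruitment channel mostly reaches frequent anglers with a history of mental health problems. None of these counts or response rates come from the paper.

```python
# Toy example: all counts and response rates are invented for illustration.

def odds_ratio(table):
    """Odds ratio of 'history' for frequent vs rare anglers in a 2x2 table."""
    a = table[("frequent", "history")]
    b = table[("frequent", "none")]
    c = table[("rare", "history")]
    d = table[("rare", "none")]
    return (a * d) / (b * c)

# Hypothetical population with no association at all.
population = {
    ("frequent", "history"): 1000,
    ("frequent", "none"): 1000,
    ("rare", "history"): 1000,
    ("rare", "none"): 1000,
}

# Hypothetical response rates (percent): one group is easier to reach.
response_percent = {
    ("frequent", "history"): 30,
    ("frequent", "none"): 10,
    ("rare", "history"): 10,
    ("rare", "none"): 10,
}

# Expected number of respondents in each cell.
sample = {k: population[k] * response_percent[k] // 100 for k in population}

print("population odds ratio:", odds_ratio(population))
print("sample odds ratio:", odds_ratio(sample))
```

The population has no association, but the sample does. The direction and size of the distortion depend entirely on who ends up responding, so different invented rates would push the correlation the other way.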