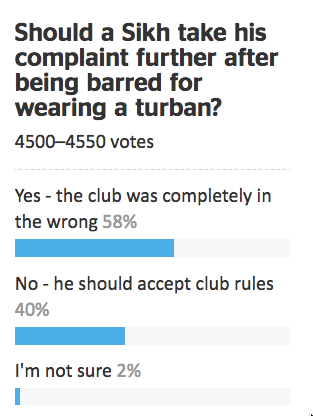

Stuff has a story about the #redpeak flag campaign, including a clicky bogus poll that currently shows nearly 11,000 votes in support of the flag candidate. While Red Peak isn’t my favourite (I prefer Sven Baker’s Huihui), I like it better than the four official candidates. That doesn’t mean I like the bogus poll.

As I’ve written before, a self-selected poll is like a petition; it shows that at least the people who took part had the views they had. The web polls don’t really even show that — it’s pretty easy to vote two or three times. There’s also no check that the votes are from New Zealand — mine wasn’t, though most of them probably are. The Stuff clicky poll doesn’t even show that 11,000 people voted for the Red Peak flag.

So far, this Stuff poll at least hasn’t been treated as news. However, the previous one has. At the bottom of one of the #redpeak stories you can read:

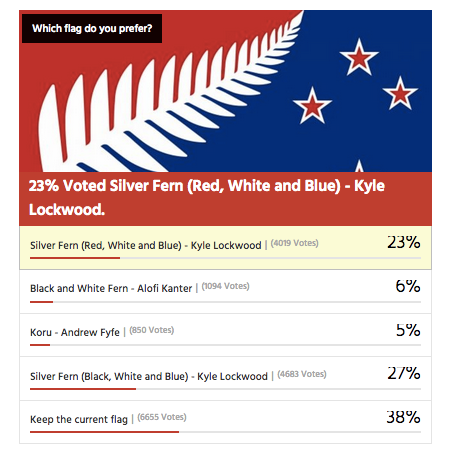

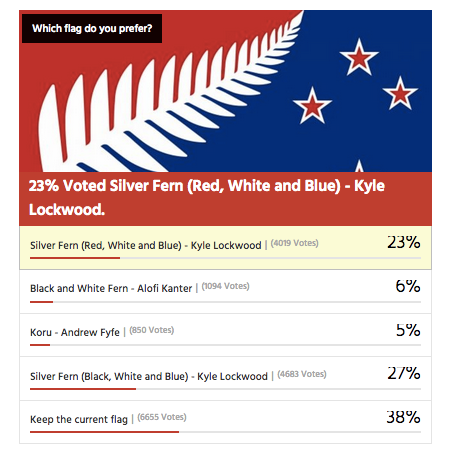

> In a Stuff.co.nz poll of 16,890 readers, 39 per cent of readers voted to keep the current flag rather than change it.
>
> Kyle Lockwood’s Silver Fern (black, white and blue) was the most popular alternate flag design, with 27 per cent of the vote, while his other design, Silver Fern (red, white and blue), got 23 per cent. This meant, if Lockwood fans rallied around one of his flags, they could vote one in.
>
> Flags designed by Alofi Kanter – the black and white fern – and Andrew Fyfe each got 6 per cent or less of the vote.

They don’t say, but that looks very much like this clicky poll from an earlier Stuff flag story, though it’s now up to about 17,500 votes.

You can’t use results from clicky polls as population estimates, whether for readers or the electorate as a whole. It doesn’t work.
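One way to see why: the share a flag gets in a clicky poll depends on who bothers to click as well as on what the population thinks. Here is a minimal sketch in Python, where the function name and all the click rates are hypothetical, chosen only to illustrate the point. It also ignores people voting more than once, which would make things worse.

```python
# How self-selection can distort a clicky poll.
# All click rates below are hypothetical, for illustration only.

def clicky_poll_share(true_share, click_rate_supporters, click_rate_others):
    """Share of poll clicks coming from supporters of a flag.

    true_share: fraction of the population that supports the flag
    click_rate_*: fraction of each group that bothers to click
    """
    supporter_clicks = true_share * click_rate_supporters
    other_clicks = (1 - true_share) * click_rate_others
    return supporter_clicks / (supporter_clicks + other_clicks)

# If everyone is equally likely to click, the poll matches the population.
print(clicky_poll_share(0.015, 0.01, 0.01))

# If supporters are 50 times as likely to click, it no longer does.
print(clicky_poll_share(0.015, 0.50, 0.01))
```

Since the click rates are unknown in practice, there is no way to work backwards from the poll to the population.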

Over approximately the same time period there was a real survey by UMR (PDF), which found only 52% of people preferred their favourite among the four flags to the current flag. The referendum looks a lot closer than the clicky poll suggests.

The two Lockwood ferns were robustly the most popular flags in the survey, coming in as the top two for all age groups; men and women; Māori; and Labour, National and Green voters. Red Peak was one of the four least preferred in every one of these groups.

Only 1.5% of respondents listed Red Peak among their top four. Over the whole electorate that’s still about 45,000, which is why an online petition with 31,000 electronic signatures should have about the impact it’s going to have on the government.
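The electorate-wide figure is just the survey share scaled up. As a rough sketch, assuming an electorate of about 3 million (the round number implied by the figures above, not an official count):

```python
# Back-of-envelope scaling of a survey share to the whole electorate.
# ELECTORATE is an assumption: the round number implied by the
# figures in the post, not an official enrolment count.
ELECTORATE = 3_000_000
RED_PEAK_TOP_FOUR_SHARE = 0.015  # UMR survey figure quoted in the post
PETITION_SIGNATURES = 31_000     # petition figure quoted in the post

def scale_to_electorate(share, electorate=ELECTORATE):
    """Estimated number of people corresponding to a survey share."""
    return round(share * electorate)

estimated_supporters = scale_to_electorate(RED_PEAK_TOP_FOUR_SHARE)
print(f"Estimated Red Peak supporters: {estimated_supporters:,}")
print(f"Petition signatures as a share of the electorate: "
      f"{PETITION_SIGNATURES / ELECTORATE:.1%}")
```

The survey estimate has its own sampling error, so this is an order-of-magnitude figure rather than a precise count.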

Depending on turnout, it’s going to take in the neighbourhood of a million supporting votes for a new flag to overturn the current flag. It’s going to take about the same number of votes ranking Red Peak higher than the Lockwood ferns for it to get on to the final ballot.
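To see where “in the neighbourhood of a million” comes from, here is a small sketch of the arithmetic. The electorate size and the turnout values are illustrative assumptions, not forecasts.

```python
# Rough count of votes a new flag needs to beat the current flag.
# ELECTORATE and the turnout values are illustrative assumptions.
ELECTORATE = 3_000_000

def votes_to_win(turnout, electorate=ELECTORATE):
    """Smallest number of votes that is a strict majority of votes cast."""
    votes_cast = round(electorate * turnout)
    return votes_cast // 2 + 1

for turnout in (0.5, 0.6, 0.7, 0.8):
    needed = votes_to_win(turnout)
    print(f"turnout {turnout:.0%}: about {needed:,} votes needed")
```

Across that range of turnouts the answer stays within a few hundred thousand of a million.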

In the Stuff story, Graeme Edgeler suggests “Perhaps if there were a million people in a march” would be enough to change the government’s mind. He’s probably right, though I’d say a million estimated from a proper survey, or maybe fifty thousand in a march should be enough. For an internet petition, perhaps two hundred thousand might be a persuasive number, if there was some care taken that they were distinct people and eligible voters.

For those of us in a minority on flag matters, Andrew Geddis has a useful take:

> In fact, I’m pretty take-it-or-leave-it on the whole point of having a “national” flag. Sure, we need something to put up on public buildings and hoist a few times at sporting events. But I quite like the fact that we’ve got a bunch of other generally used national symbols that can be appropriated for different purposes. The silver fern for putting onto backpacks in Europe. The Kiwi for our armed forces and “Buy NZ Made” logos. The Koru for when we’re feeling the need to be all bi-cultural.

If you like Red Peak, fly it. At the moment, the available data suggest you’re in as much of a minority as me.